
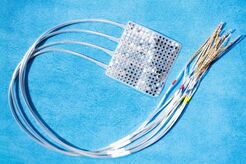

by Alex Vikoulov [Posted July 31, 2019, 3:25 pm PST]

Researchers at the University of California, San Francisco (UCSF), have successfully synthesized human thoughts into real-time speech. This paves the way for consumer devices that can respond to thoughts without requiring the user to state commands aloud.

In April, researchers at UCSF announced a ‘neural speech prosthesis’ that could produce relatively natural-sounding speech from decoded brain activity. In a study published today, they revealed that they have continued that work and have successfully decoded brain activity as speech in real time, turning brain signals for speech into written sentences. The project aims to transform how patients with severe disabilities will be able to communicate in the future.

Electrodes temporarily placed on the surface of patients’ brains for a few days to map the origins of their seizures before neurosurgery. Photo by Noah Berger

The breakthrough is the first to show how the intention to say specific words can be extracted from brain activity and converted into text rapidly enough to keep pace with natural conversation. However, the current brain-reading software works only with the specific sentences it has been trained on, although scientists believe a more powerful system could be designed later.

The project’s primary goal is to create a product that allows paralyzed individuals to communicate more fluidly than existing devices, which pick up eye movements and muscle twitches to control a virtual keyboard. “To date there is no speech prosthetic system that allows users to have interactions on the rapid timescale of a human conversation,” says Edward Chang, a neurosurgeon and lead researcher of the study.
The study was made possible by three epilepsy patients who were about to undergo neurosurgery for their condition. Before their operations, all three had a small patch of tiny electrodes placed directly on the brain for at least a week to map the origins of their seizures, the researchers state in their paper, published in Nature Communications.

The patients reportedly allowed researchers to record their brain activity while each was asked nine questions and asked to read a list of 24 potential responses. Chang's team then built computer models that matched the patterns of brain activity to the questions and the answers. The researchers were able to decode produced and perceived utterances with accuracy rates as high as 61% and 76%, respectively.

"Real-time processing of brain activity has been used to decode simple speech sounds, but this is the first time this approach has been used to identify spoken words and phrases," says David Moses, a researcher on the study. "It’s important to keep in mind that we achieved this using a very limited vocabulary, but in future studies we hope to increase the flexibility as well as the accuracy of what we can translate from brain activity."

Another goal of the project is to read “imagined speech,” or sentences spoken in the mind. Currently, the system detects brain signals that are sent to move the lips, tongue, jaw and larynx; in other words, the machinery of speech. However, for some patients with injuries or neurodegenerative disease, these signals may not be sufficient, and more sophisticated ways of reading sentences in the brain will be required.

The research was funded under a Sponsored Academic Research Agreement with Facebook, which announced a ‘typing-by-brain’ project in 2017. While the medical industry sees the technology as a potential way to enable people with disabilities to ‘speak’ using thoughts, Facebook appears to be eyeing it for the development of brain-controlled augmented reality (AR) glasses.
-Alex Vikoulov

READ MORE: Team IDs Spoken Words and Phrases in Real Time from Brain’s Speech Signals [University of California San Francisco]

Keywords: University of California, UCSF, neural speech prosthesis, brain activity, natural conversation, Edward Chang, Nature Communications, David Moses, imagined speech, typing by brain, Facebook, augmented reality, AR

Image Credits: Shutterstock, UCSF


Disclaimer: e_News™ delivers the most urgent news of the day that we find relevant to the main theme of EcstadelicNET, such as new, cutting-edge scientific research, technological breakthroughs and emerging trends. Some material may be taken fully or partially from outside sources. The Top Stories section, on the other hand, contains only original content written by affiliated authors.


- The Cybernetic Theory of Mind by Alex M. Vikoulov (2022): eBook Series
- The Syntellect Hypothesis: Five Paradigms of the Mind's Evolution by Alex M. Vikoulov (2020): eBook, Paperback, Hardcover, Audiobook
- The Omega Singularity: Universal Mind & The Fractal Multiverse by Alex M. Vikoulov (2022): eBook
- THEOGENESIS: Transdimensional Propagation & Universal Expansion by Alex M. Vikoulov (2021): eBook
- The Cybernetic Singularity: The Syntellect Emergence by Alex M. Vikoulov (2021): eBook
- TECHNOCULTURE: The Rise of Man by Alex M. Vikoulov (2020): eBook
- NOOGENESIS: Computational Biology by Alex M. Vikoulov (2020): eBook
- The Ouroboros Code: Reality's Digital Alchemy Self-Simulation Bridging Science and Spirituality by Antonin Tuynman (2019): eBook, Paperback
- The Science and Philosophy of Information by Alex M. Vikoulov (2019): eBook Series
- Theology of Digital Physics: Phenomenal Consciousness, The Cosmic Self & The Pantheistic Interpretation of Our Holographic Reality by Alex M. Vikoulov (2019): eBook
- The Intelligence Supernova: Essays on Cybernetic Transhumanism, The Simulation Singularity & The Syntellect Emergence by Alex M. Vikoulov (2019): eBook
- The Physics of Time: D-Theory of Time & Temporal Mechanics by Alex M. Vikoulov (2019): eBook
- The Origins of Us: Evolutionary Emergence and The Omega Point Cosmology by Alex M. Vikoulov (2019): eBook
- More Than An Algorithm: Exploring the gap between natural evolution and digitally computed artificial intelligence by Antonin Tuynman (2019): eBook

Editor-in-Chief

Alex M. Vikoulov is a futurist, evolutionary cyberneticist and philosopher, editor-in-chief at Ecstadelic Media Group, filmmaker, essayist, and author of many books, including the 2019-2020 best-seller "The Syntellect Hypothesis: Five Paradigms of the Mind's Evolution."
